Ever find yourself chatting with a virtual assistant or watching an app predict your next word? That’s the magic of Natural Language Processing (NLP) at work. It’s the art and science of enabling computers to not just process, but understand and even generate human language. Think of it as building a bridge between our messy, nuanced world of words and the logical, structured realm of machines. Instead of just seeing strings of characters, NLP allows computers to extract meaning, sentiment, and intention from text and speech.

But how does this digital alchemy actually happen? It’s a multi-layered process, involving several key steps and concepts. Here’s a simplified look under the hood:

1. Core NLP Tasks: The Building Blocks

- Tokenization: The Art of Word Segmentation: Imagine trying to build a house without individual bricks. Tokenization breaks a sentence into its fundamental units, called tokens. These are typically words, but can also be punctuation marks, symbols, or subword pieces. For example:

  - Input: “NLP is fascinating, isn’t it?”
  - Output: [“NLP”, “is”, “fascinating”, “,”, “isn’t”, “it”, “?”] (some tokenizers split “isn’t” further into “is” and “n’t”)

- Part-of-Speech (POS) Tagging: Giving Words a Role: Each word plays a role in a sentence. POS tagging assigns a grammatical tag (noun, verb, adjective, etc.) to each token. Think of it like casting actors in a play, where each actor has a specific role to fulfill. The tags below follow the Penn Treebank tagset (DT = determiner, JJ = adjective, NN = singular noun, VBZ = third-person singular present verb, IN = preposition).

  - Input: “The quick brown fox jumps over the lazy dog.”
  - Output: [(“The”, “DT”), (“quick”, “JJ”), (“brown”, “JJ”), (“fox”, “NN”), (“jumps”, “VBZ”), (“over”, “IN”), (“the”, “DT”), (“lazy”, “JJ”), (“dog”, “NN”), (“.”, “.”)]

- Named Entity Recognition (NER): Spotting the Key Players: NER identifies and categorizes important entities in text, like people, organizations, locations, dates, and more. It’s like having a detective who can pick out the key individuals and places in a news article.

  - Input: “Elon Musk announced that Tesla will open a new factory in Berlin in 2024.”
  - Output: [(“Elon Musk”, “PERSON”), (“Tesla”, “ORG”), (“Berlin”, “GPE”), (“2024”, “DATE”)] (GPE stands for geopolitical entity, such as a city or country)

- Lemmatization and Stemming: Finding the Root: Words can appear in different forms (e.g., “running,” “ran,” “runs”). Lemmatization and stemming both reduce words to a base form, but with different approaches. Lemmatization looks up the dictionary form (lemma) of a word, so it can map an irregular form like “ran” to “run”. Stemming just chops off endings by rule, so it is faster but cannot handle irregular forms and may produce strings that are not real words.

  - Input: “The runner was running quickly.”
  - Lemmatization Output: “The runner be run quickly.” (a lemmatizer that knows the part of speech maps “was” to “be” and “running” to “run”)
  - Stemming Output (Porter stemmer, which also lowercases): “the runner wa run quickli.” (Stemming is less accurate: “wa” and “quickli” are not words.)

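- Parsing (Syntactic Analysis): Unraveling the Sentence Structure: Parsing analyzes the grammatical structure of a sentence, revealing how words relate to each other. Think of it like diagramming sentences in English class, but on steroids. The result is typically a tree of nested phrases (constituency parsing) or a set of head-to-dependent links between words (dependency parsing).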

- Semantic Analysis: Delving into Meaning: This goes beyond just identifying words and their roles; it’s about understanding the meaning behind the words. This can involve:

  - Word Sense Disambiguation: Figuring out which meaning of a word is intended in a given context (e.g., “The bank is closed” vs. “The river bank is eroding”).
  - Relationship Extraction: Identifying relationships between entities (e.g., in “Apple was founded by Steve Jobs”, identifying the relationship “founded by” between “Apple” and “Steve Jobs”).
  - Sentiment Analysis: Determining the emotional tone (positive, negative, neutral) expressed in a text.

- Topic Modeling: Discovering the main themes discussed in a collection of documents.

- Text Summarization: Condensing a long piece of text into a shorter, more digestible version.
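To make the first and fourth building blocks above a little more concrete, here is a minimal sketch of tokenization and stemming using only Python’s standard library. The regular expression, the three-entry suffix list, and the helper names `tokenize` and `crude_stem` are assumptions made up for illustration; this is not the Porter algorithm or any library’s tokenizer, just a toy that shows the idea of “split into units” and “chop off endings”.

```python
import re

# A token is a run of word characters (optionally joined by an apostrophe,
# straight or curly) or a single punctuation mark.
TOKEN_PATTERN = re.compile(r"\w+(?:['’]\w+)*|[^\w\s]")


def tokenize(text):
    """Split text into word and punctuation tokens."""
    return TOKEN_PATTERN.findall(text)


# Hypothetical, deliberately tiny suffix list. Real stemmers use many more rules.
SUFFIXES = ("ing", "ly", "s")


def crude_stem(token):
    """Lowercase the token and strip the first matching suffix,
    keeping at least three characters of stem."""
    word = token.lower()
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            stem = word[: -len(suffix)]
            # Undo consonant doubling: "running" -> "runn" -> "run".
            if suffix == "ing" and len(stem) >= 2 and stem[-1] == stem[-2]:
                stem = stem[:-1]
            return stem
    return word


tokens = tokenize("NLP is fascinating, isn't it?")
stems = [crude_stem(t) for t in tokenize("The runner was running quickly.")]
print(tokens)
print(stems)
```

Note that this toy stemmer leaves “runner” untouched and has no way to relate “ran” to “run”; that gap is exactly what a dictionary-backed lemmatizer is for.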

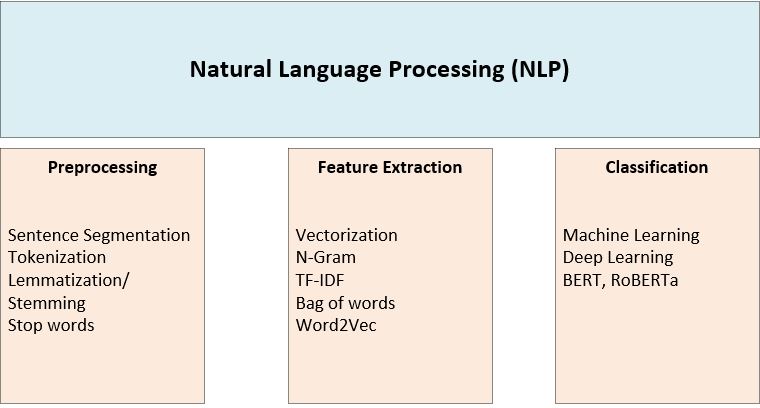
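Of the semantic tasks listed in this section, sentiment analysis is probably the easiest to sketch without a trained model. The example below is a rough lexicon-based approach: count positive and negative words, and flip the polarity of a word that directly follows a negation. The word lists and the `sentiment` function are hypothetical and far too small for real use; production systems usually rely on curated lexicons or trained classifiers.

```python
import re

# Hypothetical mini-lexicons, chosen only for this illustration.
POSITIVE = {"good", "great", "love", "excellent", "fascinating"}
NEGATIVE = {"bad", "terrible", "hate", "awful", "boring"}
NEGATIONS = {"not", "never", "no"}


def sentiment(text):
    """Label text as positive, negative, or neutral by counting lexicon hits.

    A sentiment word that directly follows a negation has its polarity flipped.
    """
    words = re.findall(r"[a-z']+", text.lower())
    score = 0
    for i, word in enumerate(words):
        polarity = (word in POSITIVE) - (word in NEGATIVE)  # +1, -1, or 0
        if i > 0 and words[i - 1] in NEGATIONS:
            polarity = -polarity
        score += polarity
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(sentiment("I love this great phone"))
print(sentiment("The plot was not good"))
```

A sketch like this breaks down quickly on sarcasm, idioms, and longer-range negation, which is one reason learned models dominate in practice.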

2. The NLP Workflow: From Raw Text to Insights

The specific steps in an NLP project can vary, but a common workflow looks something like this:

- Data Acquisition: Gathering the text data you need.

- Preprocessing: Cleaning and preparing the data. This often involves removing irrelevant characters, standardizing text formatting, and performing tokenization, stop word removal, and stemming/lemmatization.

- Feature Engineering: Transforming the text into numerical representations that machine learning models can understand. Popular methods include:

  - Bag-of-Words (BoW): A simple representation that counts how often each word appears in a document, ignoring word order.
  - TF-IDF (Term Frequency-Inverse Document Frequency): Weights each word by how often it appears in a document, discounted by how many documents in the dataset contain it, so that words common everywhere (like “the”) score low.
  - Word Embeddings (Word2Vec, GloVe, FastText): Dense vector representations of words that capture semantic relationships (words with similar meanings are closer together in the vector space).

- Model Selection and Training: Choosing an appropriate machine learning or deep learning model and training it on your data.

- Evaluation: Assessing the performance of your model on a separate test dataset, using metrics such as accuracy, precision, recall, or F1.

- Deployment and Monitoring: Putting your model into action and continuously monitoring its performance.
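The feature engineering step above is where text turns into numbers, so it is worth a small worked sketch. The code below builds Bag-of-Words counts and TF-IDF weights by hand for three made-up sentences, then compares documents with cosine similarity. The formula used here (term frequency as count divided by document length, inverse document frequency as log(N / df)) is one common textbook variant and an assumption on my part; libraries typically apply smoothed versions, so their numbers will differ.

```python
import math
from collections import Counter

# Hypothetical toy corpus.
docs = [
    "the cat sat on the mat",
    "the dog sat on the log",
    "cats and dogs are pets",
]
tokenized = [doc.split() for doc in docs]

# Bag-of-Words: raw term counts per document.
bow = [Counter(tokens) for tokens in tokenized]

# Document frequency: number of documents that contain each term.
doc_freq = Counter(term for tokens in tokenized for term in set(tokens))
n_docs = len(docs)


def tfidf(counts):
    """TF-IDF with tf = count / document length and idf = log(N / df)."""
    total = sum(counts.values())
    return {
        term: (count / total) * math.log(n_docs / doc_freq[term])
        for term, count in counts.items()
    }


def cosine(u, v):
    """Cosine similarity between two sparse vectors stored as dicts."""
    dot = sum(weight * v.get(term, 0.0) for term, weight in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


vectors = [tfidf(counts) for counts in bow]
print(bow[0])
print(cosine(vectors[0], vectors[1]), cosine(vectors[0], vectors[2]))
```

In the first document, “the” has the highest raw count but ends up with a lower TF-IDF weight than “cat”, because “the” also appears in another document. The third document shares no exact tokens with the first (“cats” is not “cat”), so their similarity is zero, which is a nice illustration of why stemming or lemmatization often comes before this step.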

3. NLP Approaches: A Toolkit of Techniques

- Rule-Based Systems: Rely on explicitly defined rules and grammars. They are easy to understand but struggle with the complexities of real-world language. Think of it like programming a very specific set of instructions.
- Statistical NLP (Machine Learning): Uses statistical models trained on data to learn patterns. These are more robust than hand-written rules but require a lot of training data, usually labeled. Examples include Naive Bayes, Support Vector Machines (SVMs), and Hidden Markov Models (HMMs).
- Deep Learning: Employs neural networks to learn complex language representations. Deep learning models, like Transformers (BERT, GPT), have revolutionized NLP, achieving state-of-the-art results on tasks such as translation, question answering, and summarization.
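To give a feel for the statistical approach described above, here is a minimal sketch of a multinomial Naive Bayes text classifier with add-one (Laplace) smoothing, written with the standard library only. The four training sentences, the “spam”/“ham” labels, and the `train` and `predict` helpers are all hypothetical; a real project would use far more data and most likely an existing library implementation.

```python
import math
from collections import Counter, defaultdict

# Hypothetical toy training set of (text, label) pairs.
TRAIN = [
    ("win a free prize now", "spam"),
    ("free money claim your prize", "spam"),
    ("meeting agenda for monday", "ham"),
    ("lunch on monday with the team", "ham"),
]


def train(examples):
    """Collect per-class document counts, per-class word counts, and the vocabulary."""
    class_counts = Counter()
    word_counts = defaultdict(Counter)
    for text, label in examples:
        class_counts[label] += 1
        word_counts[label].update(text.split())
    vocab = {word for counts in word_counts.values() for word in counts}
    return class_counts, word_counts, vocab


def predict(text, model):
    """Return the class with the highest log-posterior under add-one smoothing."""
    class_counts, word_counts, vocab = model
    total_docs = sum(class_counts.values())
    scores = {}
    for label in class_counts:
        total_words = sum(word_counts[label].values())
        # Start from the log prior, then add one log-likelihood term per word.
        score = math.log(class_counts[label] / total_docs)
        for word in text.split():
            if word not in vocab:
                continue  # ignore words never seen in training
            count = word_counts[label][word]
            score += math.log((count + 1) / (total_words + len(vocab)))
        scores[label] = score
    return max(scores, key=scores.get)


model = train(TRAIN)
print(predict("claim your free prize", model))
print(predict("agenda for the team meeting", model))
```

Nothing here is hand-coded about what spam looks like; the word statistics come entirely from the labeled examples, which is the key difference from a rule-based system.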

4. Essential Tools and Libraries

- NLTK (Natural Language Toolkit): A great library for learning and experimenting with NLP.
- spaCy: A fast and efficient library ideal for production environments.
- Transformers (Hugging Face): Provides access to pre-trained transformer models.
- Gensim: Excellent for topic modeling and document similarity.
- Scikit-learn: A versatile machine learning library for a wide range of NLP tasks.
- TensorFlow and PyTorch: Powerful deep learning frameworks.

5. NLP in Action: Real-World Applications

- Chatbots and Virtual Assistants: Providing conversational interfaces.
- Machine Translation: Breaking down language barriers.
- Text Summarization: Condensing information overload.
- Sentiment Analysis: Understanding public opinion.
- Spam Detection: Protecting inboxes.
- Question Answering: Accessing information more naturally.
- Content Generation: Writing articles, poems, and more.

6. The Ongoing Challenges

NLP is a rapidly evolving field, and challenges remain:

- Ambiguity: Words and sentences can have multiple interpretations.
- Context Dependence: Meaning is heavily influenced by context.
- Sarcasm and Irony: Difficult for computers to detect.
- Idioms and Metaphors: Require a deeper understanding of language.
- Domain Adaptation: Models trained on one area may not work well in another.
- Bias: Training data can contain biases that are reflected in model outputs.

In conclusion, NLP is a dynamic and powerful field that is transforming how we interact with technology. From simple tasks to complex applications, NLP is bringing us closer to a world where machines can truly understand and communicate with us in our own language. And as AI continues to advance, the possibilities for NLP are virtually limitless.