We’re on the cusp of a new era in artificial intelligence, one where AI assistants are no longer limited by the static knowledge they were initially trained on. Imagine an AI that can not only understand your requests but also dynamically access, process, and integrate the latest information to provide truly insightful and relevant answers. That’s the transformative promise of Retrieval-Augmented Generation (RAG), an architectural approach that’s rapidly redefining the capabilities of generative AI.

So, what distinguishes RAG from the large language models (LLMs) that have already captured our imagination? Traditional LLMs rely almost exclusively on the datasets they were trained on, effectively encapsulating a snapshot of knowledge frozen in time. RAG, on the other hand, loosens this constraint by giving an LLM a “live” connection to an external and constantly evolving knowledge base. This external resource can encompass a wide array of information sources, including breaking news feeds, scientific research papers, proprietary company documents, and even real-time data streams. The model itself does not learn anything new in the process: its weights stay fixed, and the fresh information is supplied at query time alongside the question. In essence, RAG lets an LLM-based system deliver responses that are better grounded, more deeply contextualized, and reflective of the latest developments, provided the knowledge base itself is kept current.

Deconstructing RAG: The Synergy of Retrieval and Generation

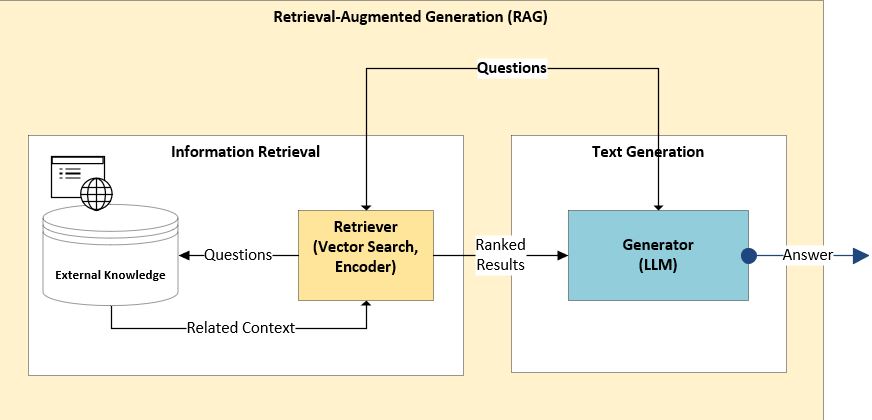

The power of RAG stems from the seamless integration of two distinct, yet complementary, components: the Retriever and the Generator.

- The Retriever: The Intelligent Knowledge Navigator: The Retriever serves as the entry point to the external knowledge base, acting as a search and filtering mechanism. It analyzes the user’s query not just by identifying keywords, but by representing its underlying semantic meaning and intent. This allows the Retriever to go beyond simple keyword matching and identify the most relevant documents, passages, or data points that address the user’s request. Imagine a team of expert researchers who can rapidly sift through terabytes of information to pinpoint the exact pieces of evidence needed to answer a specific question. Techniques such as semantic similarity search over embeddings (typically stored in a vector database) and knowledge graph traversal are often employed to keep retrieval efficient and accurate.

- The Generator: The Eloquent Synthesizer of Knowledge: Once the Retriever has identified the relevant information, the Generator takes center stage. This component is typically a powerful pre-trained large language model (LLM), such as GPT-3.5, GPT-4, or PaLM 2, capable of generating human-quality text in a variety of formats. The Generator receives the user’s original query, along with the retrieved information, and uses its language processing capabilities to craft a coherent, informative, and contextually appropriate response. It’s like a skilled writer who can synthesize diverse sources of information into a compelling and insightful narrative.
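To make the Retriever's semantic similarity search a little more concrete, here is a minimal sketch in Python. It assumes documents and queries have already been turned into vectors. The three-dimensional vectors below are hand-written placeholders, not output from a real embedding model, and the names `cosine_similarity`, `retrieve`, and `INDEX` are hypothetical. A production system would compute embeddings with a model and would usually delegate the search to a vector database.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query_vector, index, k=2):
    """Return the k (score, text) pairs most similar to query_vector."""
    scored = [(cosine_similarity(query_vector, vec), text) for text, vec in index]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


# Assumption: made-up 3-dimensional "embeddings" for illustration only.
INDEX = [
    ("Refund policy: returns accepted within 30 days.", [0.9, 0.1, 0.0]),
    ("Shipping times: 3 to 5 business days.", [0.1, 0.9, 0.0]),
    ("Office holiday schedule for the year.", [0.0, 0.1, 0.9]),
]

# Assumption: pretend this vector encodes "Can I get my money back?"
query_vector = [0.8, 0.2, 0.1]

top = retrieve(query_vector, INDEX, k=2)
for score, text in top:
    print(f"{score:.3f}  {text}")
```

Note that the query shares no keywords with the refund passage. It ranks first only because its vector points in a similar direction, which is the idea behind going beyond keyword matching.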

A Step-by-Step Look at the RAG Workflow:

1. The User’s Inquiry: The process begins with a user posing a question or request to the AI assistant.

2. Semantic Understanding: The RAG system analyzes the user’s query to understand its intent and identify the key concepts.

3. External Knowledge Retrieval: The Retriever component then initiates a search across the external knowledge base, leveraging sophisticated semantic retrieval techniques to identify relevant documents, passages, or data points.

4. Contextual Augmentation: The retrieved information is carefully integrated with the original query, creating a richer context for the LLM to work with. This augmentation step is crucial for ensuring that the Generator has access to all the necessary information to craft an accurate and informative response.

5. Response Generation: Finally, the Generator component leverages its language processing capabilities to produce a well-structured and comprehensive response, drawing upon both its internal knowledge and the retrieved external information.

6. Source Attribution (Optional): In some implementations, the RAG system may also provide citations or links to the original sources used to generate the response, allowing users to verify the information and understand the reasoning behind the AI’s conclusions.
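The workflow above can be sketched end to end in a few lines of Python. This is an illustrative outline under stated assumptions, not a reference implementation. Retrieval here ranks by simple word overlap as a stand-in for real semantic search, `generate` is a placeholder that returns a canned string instead of calling an LLM, and the `Document` class, the file names, and the prompt wording are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Document:
    source: str
    text: str


def tokenize(text):
    """Lowercase words with surrounding punctuation stripped."""
    return {word.strip(".,?!:").lower() for word in text.split()}


def retrieve(query, documents, k=2):
    """Rank documents by word overlap with the query (stand-in for semantic search)."""
    query_words = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & tokenize(doc.text)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, retrieved):
    """Contextual augmentation: put numbered passages ahead of the question."""
    context = "\n".join(f"[{i}] {doc.text}" for i, doc in enumerate(retrieved, start=1))
    return (
        "Answer using only the numbered context. Cite passages like [1].\n\n"
        f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    )


def generate(prompt):
    """Placeholder for an LLM call, so the sketch runs offline."""
    return "(model output would appear here)"


def answer(query, documents, k=2):
    retrieved = retrieve(query, documents, k)      # external knowledge retrieval
    prompt = build_prompt(query, retrieved)        # contextual augmentation
    response = generate(prompt)                    # response generation
    sources = [doc.source for doc in retrieved]    # optional source attribution
    return response, sources, prompt


# Assumption: toy documents invented for this example.
DOCS = [
    Document("handbook.md", "Employees accrue 1.5 vacation days per month."),
    Document("it-faq.md", "Reset your password from the account settings page."),
    Document("travel.md", "Book flights through the approved travel portal."),
]

response, sources, prompt = answer("How many vacation days do employees accrue?", DOCS, k=1)
print(prompt)
print(response)
print("Sources:", sources)
```

Returning the sources alongside the response is what makes the optional attribution step possible: the caller can show users exactly which passages were placed in the prompt.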

The Compelling Advantages of RAG:

RAG offers a multitude of benefits compared to traditional LLMs, making it a game-changer for a wide range of applications:

- Improved Accuracy and Currency: By retrieving up-to-date information at query time, RAG reduces the risk of generating outdated, inaccurate, or irrelevant responses, although the answer can only be as good as the sources it retrieves. Imagine a financial advisor AI that can provide investment recommendations based on the latest market trends and economic indicators.

- Enhanced Contextual Awareness: RAG enables LLMs to understand the nuances of a user’s request and provide more relevant and helpful responses by incorporating external knowledge. For example, a RAG-powered travel assistant could analyze real-time flight data, weather forecasts, and local events to provide personalized travel recommendations.

- Adaptability and Domain Expertise: RAG’s ability to access and integrate external knowledge makes it adaptable to a wide range of domains and tasks. This allows organizations to build AI applications that are tailored to their specific needs and can leverage the latest information in their respective fields. A RAG-enabled medical diagnosis tool could analyze the latest medical research and patient records to provide more accurate and personalized diagnoses.

- Transparency and Explainability: By providing access to the sources used to generate responses, RAG enhances the transparency and explainability of AI-powered applications. This is particularly important in sensitive domains like healthcare and finance, where users need to understand the reasoning behind the AI’s conclusions.

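- Reduced Hallucinations: By grounding its responses in retrieved external knowledge, RAG can help mitigate the problem of “hallucinations,” where LLMs generate plausible-sounding but incorrect or nonsensical information. It reduces the problem rather than eliminating it, since the model can still misread or ignore the retrieved passages.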
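Two of the advantages above, currency and reduced hallucinations, can be illustrated with a small Python sketch. It assumes a toy in-memory knowledge base with word-overlap search standing in for semantic search. The `KnowledgeBase` class, the `grounded_answer` helper, the stopword list, and the sample sentences are all hypothetical. The point is only that adding a document changes what the system can answer without retraining anything, and that the system can decline to answer when nothing relevant is retrieved.

```python
# Assumption: a tiny stopword list so common words do not count as matches.
STOPWORDS = {"the", "a", "an", "is", "was", "when", "what", "of", "in", "to", "with"}


class KnowledgeBase:
    """A toy in-memory document store with word-overlap search."""

    def __init__(self):
        self._documents = []

    def add(self, text):
        """New knowledge becomes searchable immediately; no model retraining."""
        self._documents.append(text)

    def search(self, query, k=1):
        """Return up to k documents sharing at least one content word with the query."""
        query_words = self._words(query)
        scored = [(len(query_words & self._words(doc)), doc) for doc in self._documents]
        relevant = [pair for pair in scored if pair[0] > 0]
        relevant.sort(key=lambda pair: pair[0], reverse=True)
        return [doc for _, doc in relevant[:k]]

    @staticmethod
    def _words(text):
        words = {w.strip(".,?!:").lower() for w in text.split()}
        return words - STOPWORDS


def grounded_answer(query, kb):
    """Answer only from retrieved text; abstain when nothing relevant is found."""
    passages = kb.search(query)
    if not passages:
        return "No supporting source found."
    return f"According to the knowledge base: {passages[0]}"


kb = KnowledgeBase()
kb.add("The v1 API supports JSON responses.")

question = "When was the v2 release published?"
before = grounded_answer(question, kb)

# The knowledge base is updated; the answering code is untouched.
kb.add("The v2 release was published with streaming support.")
after = grounded_answer(question, kb)

print("Before update:", before)
print("After update: ", after)
```

In a full system the abstention branch would typically be handled in the prompt or by a relevance threshold on retrieval scores; the explicit check here simply makes the behavior visible.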

Beyond the Horizon: The Future of RAG and Generative AI

RAG is not merely an incremental improvement in generative AI; it represents a fundamental shift in the way we build and deploy AI applications. As RAG technology continues to evolve, we can expect to see even more sophisticated and innovative applications emerge.

- Personalized Learning: RAG could revolutionize education by providing personalized learning experiences that adapt to each student’s individual needs and learning style. AI tutors could access a vast library of educational resources and provide customized feedback based on each student’s progress.

- Scientific Discovery: RAG can accelerate scientific discovery by enabling researchers to quickly search and analyze vast amounts of scientific literature and data, surfacing patterns and insights that would otherwise be difficult to find. AI-powered research assistants could help scientists design experiments, analyze data, and write research papers.

- Customer Service Transformation: RAG-powered chatbots can provide instant and accurate answers to customer inquiries, freeing up human agents to focus on more complex issues. Chatbots could access product documentation, FAQs, and customer support tickets to provide personalized support experiences.

- Content Creation Revolution: RAG will empower content creators with the ability to generate high-quality and engaging content quickly and efficiently. AI-powered writing tools could help writers research topics, generate outlines, and write drafts.

The potential of RAG is vast and far-reaching. As we continue to refine and develop this technology, we can expect to see even more transformative applications emerge, ultimately bridging the gap between human knowledge and artificial intelligence. The key to success lies not just in the technology itself, but in how we ethically and responsibly deploy it to augment human capabilities and create a more informed and empowered world. Watch for advancements in vector database efficiency, the development of more robust retrieval algorithms that can handle noisy or ambiguous queries, and an increasing focus on the ethical considerations of using external knowledge sources. The future of generative AI is being written now, and RAG is a central chapter in that story.