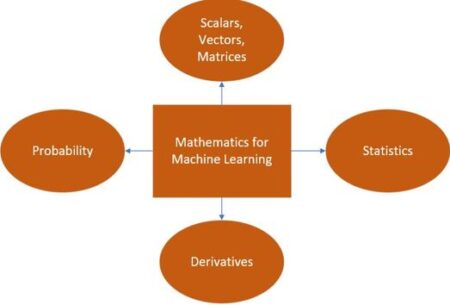

Machine learning relies heavily on a handful of core mathematical concepts. A solid grasp of them is crucial for building, interpreting, and improving machine learning models. This post covers vectors, matrices, derivatives, probability, and basic statistics, and illustrates the relevance of each with practical examples.

1. Vectors

- Definition: Vectors are ordered collections of numbers, often arranged as columns or rows. Think of them as a way to represent a point in multi-dimensional space.

- Applications in Machine Learning:

- Feature Representation: Each data point in a dataset can be represented as a feature vector, where each element corresponds to a specific feature or attribute.

- Example: In image classification, an image could be represented by a vector of pixel intensities, or by features extracted through more complex image analysis. A vector representing a cat image might look like this: [100, 23, 201, 78, …], where each number is the value of a particular feature.

- Example: In a housing price prediction model, a house could be described by features like number of bedrooms, square footage, number of bathrooms, and presence of a garage, represented by a vector like [3, 1500, 2, 1].

- Model Parameters: The learnable parameters of many models, such as the weights and biases of linear regression models and neural networks, are stored and manipulated as vectors.

- Example: The weights learned by a linear regression model for our house example could be stored in a vector like [0.5, 0.2, 0.8, -0.1].

- Embeddings: Vectors are used to represent categorical data, such as words, in a continuous, dense space. This is particularly useful in natural language processing.

- Example: In text classification, each word can be represented by a word embedding vector, for instance [0.2, 0.7, -0.1, 0.4, …]. These embeddings capture semantic relationships between words: words with similar meanings tend to have vectors that lie close together.

- Importance: Vectors provide a fundamental and efficient way to represent and process data. They are the basis for more complex matrix operations.
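To make the link between feature vectors and parameter vectors concrete, here is a minimal sketch that combines the house vector and the weight vector from the examples above with a dot product, which is how a linear model turns features into a prediction. It uses plain Python so the arithmetic stays visible; the helper name `dot` is an assumption for illustration, the weights are the illustrative values from the text rather than a trained model, and the bias term is left out.

```python
# Feature vector for one house: [bedrooms, square footage, bathrooms, garage]
house = [3, 1500, 2, 1]

# Illustrative weights from the linear regression example (not a trained model)
weights = [0.5, 0.2, 0.8, -0.1]


def dot(u, v):
    """Return the dot product of two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    return sum(a * b for a, b in zip(u, v))


# A linear model's prediction is the dot product of features and weights
# (the bias term is omitted here for simplicity).
prediction = dot(house, weights)
print(round(prediction, 2))
```

In practice a numerical library such as NumPy would usually handle this, but the underlying operation is the same multiply-and-sum.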

2. Matrices

- Definition: Matrices are rectangular arrays of numbers organized in rows and columns.

- Applications in Machine Learning:

- Datasets: A dataset can be structured as a matrix, where each row represents a data point (feature vector) and each column represents a particular feature.

- Example: If you have a dataset of 100 houses, each described by 4 features, the dataset can be represented as a 100×4 matrix.

- Linear Transformations: Matrices are used to represent linear transformations of data, particularly within linear models and neural networks.

- Example: A layer in a neural network uses a weight matrix to transform the input vector into a different representation space.

- Covariance: Covariance matrices capture how different features in a dataset vary in relation to one another.

- Image Processing: Images can be represented as matrices, with pixel intensities forming the elements. Color images can be represented as a stack of matrices, one for each color channel (e.g., Red, Green, Blue).

- Graph Representation: Adjacency matrices are used to represent the structure of graphs in graph-based machine learning.

- Importance: Matrices enable efficient processing of large datasets and allow for expressing complex linear operations, such as transformations and projections.
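The two most common uses above, a dataset stored as a matrix and a weight matrix acting as a linear transformation, both come down to matrix-vector multiplication. The sketch below shows each in plain Python. The three houses, the helper `matvec`, and the 2×4 matrix `W` are hypothetical values chosen for illustration, not taken from a real dataset or model.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


# A tiny dataset: 3 houses (rows) x 4 features (columns).
# Columns: bedrooms, square footage, bathrooms, garage
X = [
    [3, 1500, 2, 1],
    [2, 900, 1, 0],
    [4, 2200, 3, 1],
]

# Illustrative weight vector for a linear model (bias omitted)
w = [0.5, 0.2, 0.8, -0.1]

# One matrix-vector product yields a prediction for every row at once.
predictions = matvec(X, w)

# A 2x4 weight matrix maps a 4-dimensional input to a 2-dimensional
# representation, as a neural network layer does before its activation.
W = [
    [1.0, 0.0, 1.0, 0.0],    # first output: bedrooms + bathrooms
    [0.0, 0.001, 0.0, 1.0],  # second output: scaled area + garage flag
]
hidden = matvec(W, X[0])

print(len(X), "rows x", len(X[0]), "columns")
print([round(p, 2) for p in predictions])
print([round(h, 2) for h in hidden])
```

The shape rule is worth noting: a matrix with `n` columns can only multiply a vector of length `n`, and the result has one entry per row.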

3. Derivatives

- Definition: A derivative measures the instantaneous rate of change of a function at a given point.

- Applications in Machine Learning:

- Optimization: Derivatives are fundamental to optimization algorithms like gradient descent, which searches for the model parameters that minimize a loss function (a measure of how poorly the model is performing). The gradient, the vector of partial derivatives of the loss with respect to each parameter, points in the direction of steepest ascent of the loss, so taking small steps in the opposite direction reduces the loss.

- Example: In linear regression, the derivative of the mean squared error loss with respect to the model’s weights tells us how to adjust those weights: moving them a small step against the gradient reduces the error.

- Example: Neural networks use backpropagation, an algorithm that applies the chain rule to compute the derivative of the error with respect to each weight and bias. An optimizer then uses these derivatives to adjust the parameters.

- Feature Importance: Derivatives can be used to assess the sensitivity of a model’s output to changes in a specific feature, thereby informing feature selection.

- Importance: Derivatives enable models to learn from data by iteratively adjusting their parameters to minimize prediction errors.
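The gradient descent idea described above can be sketched for the simplest possible case: a one-weight linear model fitted with mean squared error. The toy data, the learning rate of 0.01, and the 200 steps are assumptions picked so the example converges quickly; real problems need these tuned.

```python
# Toy data generated from y = 2x, so the ideal weight is 2.0 (hypothetical data)
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]


def mse(w):
    """Mean squared error of the model y_hat = w * x."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def mse_gradient(w):
    """Derivative of the MSE with respect to w: mean(2 * (w*x - y) * x)."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


w = 0.0               # initial guess
learning_rate = 0.01  # step size (assumed value)
losses = []

for step in range(200):
    losses.append(mse(w))
    # Step against the gradient: the gradient points uphill on the loss.
    w -= learning_rate * mse_gradient(w)

print(f"learned w = {w:.4f}")
print(f"loss went from {losses[0]:.2f} to {mse(w):.2e}")
```

With more than one weight the same loop applies, except that `w` and the gradient become vectors with one entry per parameter.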

4. Probability

- Definition: Probability quantifies the likelihood of an event occurring, expressed as a number between 0 (impossible) and 1 (certain).

- Applications in Machine Learning:

- Model Building:

- Probabilistic Models: Models like Naive Bayes and Bayesian networks rely directly on probability distributions to model data and make predictions.

- Loss Functions: Certain loss functions, such as cross-entropy, are derived from concepts in probability theory.

- Uncertainty: Probability distributions are useful for quantifying the uncertainty or confidence of a model’s predictions.

- Data Analysis:

- Data Modeling: Probability distributions, such as the normal or Bernoulli distribution, can be used to describe how the values in a dataset are spread.

- Feature Engineering: Features can be created based on the estimated probabilities of certain events.

- Noise Handling: Probabilistic methods are well-suited to deal with noisy or uncertain data.

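- Model Evaluation: Evaluation metrics such as AUC (Area Under the Curve), precision, recall, and F1-score have probabilistic interpretations. For example, precision estimates the probability that a predicted positive is truly positive, and AUC equals the probability that a randomly chosen positive example is ranked above a randomly chosen negative one.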

- Specific Techniques:

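- Bayesian learning starts from prior beliefs about model parameters and updates them with observed data to obtain a posterior distribution.

- Sampling techniques such as Markov Chain Monte Carlo (MCMC) are used to approximate distributions that cannot be computed exactly, such as the posterior in many Bayesian models.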

- Importance: Probability provides a framework for reasoning about uncertainty in data and models, and for building models that are based on probabilistic principles.
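As an illustration of how a loss function follows from probability, the sketch below computes cross-entropy for a single example as the negative log of the probability the model assigned to the correct class. The function name `cross_entropy` and the two probability vectors are hypothetical, chosen only to contrast a good prediction with a confidently wrong one.

```python
import math


def cross_entropy(predicted_probs, true_index):
    """Cross-entropy for one example: -log of the probability
    assigned to the true class."""
    p = predicted_probs[true_index]
    if p <= 0.0:
        raise ValueError("probability of the true class must be positive")
    return -math.log(p)


# Two hypothetical predictions over three classes; the true class is index 0.
good_prediction = [0.7, 0.2, 0.1]  # most probability on the correct class
bad_prediction = [0.1, 0.2, 0.7]   # confident, but wrong

good_loss = cross_entropy(good_prediction, true_index=0)
bad_loss = cross_entropy(bad_prediction, true_index=0)

print(f"good prediction loss: {good_loss:.3f}")
print(f"bad prediction loss:  {bad_loss:.3f}")
```

Minimizing this loss over a dataset is the same as maximizing the likelihood of the observed labels under the model, which is why the loss penalizes confident mistakes so heavily.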

5. Basic Statistics

- Definition: Statistics is the science of collecting, analyzing, interpreting, and presenting data.

- Applications in Machine Learning:

- Data Understanding:

- Descriptive Statistics: Measures like mean, median, mode, and standard deviation are used to summarize and understand the key properties of a dataset’s distribution.

- Visualization: Histograms, scatter plots, and other visualizations are used to identify patterns and outliers in the data.

- Data Preprocessing:

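- Normalization and Standardization: Features are rescaled to a common range (normalization) or shifted to zero mean and scaled to unit variance (standardization). This often helps models that are sensitive to feature scale, such as those trained with gradient descent or based on distances.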

- Outlier Detection: Statistical methods can be used to identify and handle unusual data points.

- Feature Selection: Statistical tests are used to identify the most relevant features for a model.

- Model Evaluation:

- Evaluation Metrics: Metrics like accuracy, precision, recall, and F1-score are used to evaluate the performance of machine learning models.

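- Hypothesis Testing: Statistical hypothesis tests can be used to determine whether a difference in performance, for example between two models, is statistically significant rather than due to chance.

- Cross-validation: Repeatedly splitting the data into training and validation folds gives a more reliable estimate of a model’s performance on unseen data than a single split.

- Bias-Variance Tradeoff: A model’s error can be attributed to bias (error from overly simple assumptions) and variance (sensitivity to the particular training data), which helps diagnose underfitting and overfitting.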

- Handling Imbalanced Data: Resampling and other statistical techniques are used to deal with rare events in classification tasks.

- Importance: Statistics provides the tools for analyzing and understanding data, preparing it for use in machine learning models, and rigorously evaluating the performance of those models.
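Descriptive statistics and standardization can be tried out with Python's built-in `statistics` module. The sketch below summarizes a small, hypothetical list of square footage values and then standardizes it to zero mean and unit variance. It uses the population standard deviation (`pstdev`); using the sample version (`stdev`) instead is an equally common choice.

```python
import statistics

# Hypothetical square footage values for five houses
square_footage = [900, 1500, 2200, 1200, 1700]

# Descriptive statistics summarize the feature's distribution.
mean = statistics.fmean(square_footage)
median = statistics.median(square_footage)
std = statistics.pstdev(square_footage)  # population standard deviation

# Standardization (z-score): subtract the mean, divide by the std.
standardized = [(x - mean) / std for x in square_footage]

print(f"mean = {mean:.1f}, median = {median}, std = {std:.1f}")
print([round(z, 2) for z in standardized])
```

After standardization the feature has mean 0 and standard deviation 1, so it sits on the same scale as any other standardized feature regardless of its original units.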

Summary

- Vectors are used to represent data, model parameters, and embeddings.

- Matrices organize datasets and allow for linear transformations.

- Derivatives enable the optimization process by guiding parameter adjustments.

- Probability provides a way to model and reason about uncertainty.

- Statistics helps with data understanding, preprocessing, and model evaluation.

These mathematical concepts are intertwined and form the bedrock of many machine learning models. A firm grasp of them is essential for anyone seeking to become a proficient machine learning practitioner.