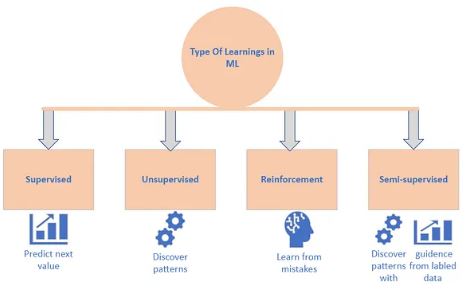

Machine Learning (ML) lets computers learn from data, identify patterns, and make data-driven decisions. Several learning paradigms underpin the field, each suited to a different kind of data and a different kind of problem. This article surveys five of them: how each one works, where it is applied, and which algorithms are commonly used.

1. Supervised Learning: Learning from Labeled Examples

Supervised Learning is one of the most widely used types of ML. In this paradigm, a model is trained on a labeled dataset: each training example pairs a set of input features with a corresponding output label, which gives the model explicit “ground truth” information. The model’s objective is to learn a mapping function that predicts the output label from the input features, including for examples it has not seen during training.

Think of it like teaching a child to identify different animals by showing them pictures of each animal along with its name. The model learns to associate the visual features of the animal (e.g., stripes for a zebra, a long neck for a giraffe) with the correct label (zebra, giraffe).

Supervised Learning algorithms excel in a wide array of tasks, including:

- Classification: Categorizing data into distinct classes. Examples include:

  - Spam detection: Identifying whether an email is spam or not.

  - Image recognition: Identifying objects in an image (e.g., cat, dog, car).

  - Medical diagnosis: Classifying a tumor as benign or malignant based on medical images and patient data.

- Regression: Predicting a continuous numerical value. Examples include:

  - Predicting housing prices: Estimating the price of a house based on features like location, size, and number of bedrooms.

  - Forecasting sales: Predicting future sales based on historical data and market trends.

  - Estimating stock prices: Predicting the future price of a stock based on historical data and market indicators.

Common Supervised Learning algorithms include:

- Linear Regression

- Logistic Regression

- Support Vector Machines (SVMs)

- Decision Trees

- Random Forests

- Neural Networks
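
To make the idea of learning a mapping from labeled examples concrete, here is a minimal sketch of simple linear regression fitted with ordinary least squares. The dataset is invented for illustration, and the helper names `fit_line` and `predict` are hypothetical rather than taken from any library.

```python
# Toy labeled dataset (made up for illustration): house size in square
# metres is the input feature, price in thousands is the output label.
sizes = [50.0, 70.0, 90.0, 110.0, 130.0]
prices = [150.0, 210.0, 270.0, 330.0, 390.0]


def fit_line(xs, ys):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(x, slope, intercept):
    """Apply the learned mapping to a new, unseen input."""
    return slope * x + intercept


# "Training": estimate the mapping from the labeled examples.
slope, intercept = fit_line(sizes, prices)

# "Inference": predict the label for a size that is not in the training set.
predicted_price = predict(100.0, slope, intercept)
print(f"price = {slope:.2f} * size + {intercept:.2f}")
print(f"predicted price for 100 m^2: {predicted_price:.1f}")
```

Real datasets are noisy and have many features, so in practice a library implementation would likely be used instead, but the train-then-predict shape stays the same.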

2. Unsupervised Learning: Discovering Hidden Structures in Unlabeled Data

In contrast to Supervised Learning, Unsupervised Learning deals with unlabeled data. Here, the goal is to uncover underlying patterns, structures, or relationships within the data without explicit guidance or pre-defined output labels. The algorithms analyze the data to identify similarities, group similar instances into clusters, or reduce the dimensionality of the data while preserving pertinent information.

Imagine giving a child a box of LEGO bricks of various shapes and colors and asking them to organize them in a meaningful way. Without any instructions, the child might group the bricks by color, shape, or size, revealing their inherent understanding of these features.

Key applications of Unsupervised Learning include:

- Clustering: Grouping data points based on similarity. Examples include:

  - Customer segmentation: Grouping customers based on their purchasing behavior and demographics to tailor marketing campaigns.

  - Document clustering: Grouping similar documents together for topic analysis and information retrieval.

  - Anomaly detection: Identifying unusual data points that deviate significantly from the norm, potentially indicating fraud or errors.

- Dimensionality Reduction: Reducing the number of features (variables) in a dataset while preserving its essential information. This can simplify the data, reduce computational complexity, and improve the performance of other machine learning algorithms. Examples include:

  - Principal Component Analysis (PCA)

  - t-distributed Stochastic Neighbor Embedding (t-SNE)

- Association Rule Learning: Discovering relationships between items in a dataset. A classic example is market basket analysis, which identifies products that are frequently purchased together.

Unsupervised Learning is particularly useful in:

- Customer segmentation

- Anomaly detection

- Recommendation systems

- Market basket analysis

- Data preprocessing for other machine learning tasks

Common Unsupervised Learning algorithms include:

- K-Means Clustering

- Hierarchical Clustering

- Principal Component Analysis (PCA)

- t-distributed Stochastic Neighbor Embedding (t-SNE)

- Association Rule Mining (e.g., Apriori algorithm)
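
As an illustration of clustering without labels, the following is a minimal sketch of K-Means in plain Python. The points, the choice of `k=2`, and the helper names are assumptions made for this example; the code only shows the alternating assign-and-update loop, not a production implementation.

```python
import random


def squared_distance(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))


def assign(points, centroids):
    """Assignment step: index of the nearest centroid for every point."""
    return [
        min(range(len(centroids)), key=lambda c: squared_distance(p, centroids[c]))
        for p in points
    ]


def k_means(points, k, iterations=20, seed=0):
    rng = random.Random(seed)
    # Initialise centroids with k distinct points drawn from the data.
    centroids = rng.sample(points, k)
    for _ in range(iterations):
        assignments = assign(points, centroids)
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:  # leave a centroid in place if its cluster is empty
                centroids[c] = tuple(sum(dim) / len(members) for dim in zip(*members))
    return centroids, assign(points, centroids)


# Unlabeled toy data (invented): two visibly separate groups of 2-D points.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
centroids, assignments = k_means(points, k=2)
print(assignments)
```

The cluster indices themselves are arbitrary; what matters is which points end up sharing an index. No labels were supplied at any stage.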

3. Reinforcement Learning: Learning Through Trial and Error

Reinforcement Learning (RL) takes a distinct approach, centering around an agent that learns to interact with an environment through a process of trial and error. The agent’s goal is to maximize its cumulative reward over time. The agent takes actions, observes the resulting state of the environment, and receives feedback in the form of rewards or penalties. Based on this feedback, the agent adjusts its strategy (or policy) to make better decisions moving forward.

Think of training a dog. You give the dog a command, and the dog responds with a behavior (its action). If the behavior is correct, you reward it with a treat; if it is not, you might give a verbal correction. The dog learns to associate certain actions with rewards and others with negative consequences, and eventually performs the desired behavior consistently.

Reinforcement Learning has achieved notable success in complex tasks such as:

- Game-playing: Mastering complex games like Go (AlphaGo), chess, and video games.

- Robotics: Controlling robots to perform tasks such as navigation, grasping, and manipulation.

- Autonomous systems: Developing self-driving cars, optimizing traffic flow, and managing power grids.

- Resource management: Optimizing resource allocation in data centers or supply chains.

Key concepts in Reinforcement Learning include:

- Agent: The learner that interacts with the environment.

- Environment: The world with which the agent interacts.

- State: A representation of the environment at a given point in time.

- Action: A decision made by the agent that affects the environment.

- Reward: A signal indicating the desirability of an action or state.

- Policy: A strategy that maps states to actions.

Common Reinforcement Learning algorithms include:

- Q-Learning

- Deep Q-Networks (DQN)

- Policy Gradient Methods (e.g., REINFORCE, Actor-Critic)
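
The agent, state, action, reward, and policy concepts above can be sketched with tabular Q-Learning on a tiny corridor world. The environment (five cells, a reward of 1 for reaching the last cell), the `step` function, and the hyperparameter values are all assumptions invented for this example.

```python
import random

N_STATES = 5          # corridor cells 0..4; cell 4 is the goal
ACTIONS = (-1, +1)    # action 0 moves left, action 1 moves right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.2  # learning rate, discount, exploration


def step(state, action_index):
    """Hypothetical environment: returns (next_state, reward, done)."""
    next_state = min(max(state + ACTIONS[action_index], 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def train(episodes=500, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # Q-value per (state, action)
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            if rng.random() < EPSILON:
                # Explore: try a random action.
                action = rng.randrange(len(ACTIONS))
            else:
                # Exploit: pick the best-known action, breaking ties randomly.
                best = max(q[state])
                action = rng.choice([a for a, v in enumerate(q[state]) if v == best])
            next_state, reward, done = step(state, action)
            # Q-Learning update: move the estimate toward the reward plus
            # the discounted value of the best action in the next state.
            target = reward + (0.0 if done else GAMMA * max(q[next_state]))
            q[state][action] += ALPHA * (target - q[state][action])
            state = next_state
    return q


q_table = train()
# The learned policy maps each non-goal state to its highest-valued action.
policy = [max(range(len(ACTIONS)), key=lambda a: q_table[s][a])
          for s in range(N_STATES - 1)]
print(policy)
```

The agent is never told that moving right is correct; it infers this from the reward signal alone, which is the trial-and-error process described earlier in this section.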

4. Semi-Supervised Learning: Leveraging Both Labeled and Unlabeled Data

Semi-Supervised Learning bridges the gap between Supervised and Unsupervised Learning by combining a small amount of labeled data with a larger pool of unlabeled data. The labeled data provides explicit guidance to the model, while the unlabeled data helps it uncover hidden structure and generalize better, which reduces the need for extensive labeled datasets.

Imagine teaching a child a new language. You might start by teaching them a few basic words and phrases (labeled data). Then, you expose them to a lot of conversations and texts in that language (unlabeled data). The child can then use their limited knowledge of the labeled words and phrases to infer the meaning of new words and phrases from the context of the unlabeled data.

Semi-Supervised Learning is particularly beneficial when:

- Obtaining labeled data is expensive, time-consuming, or requires specialized expertise.

- A large amount of unlabeled data is readily available.

Applications of Semi-Supervised Learning include:

- Speech recognition: Improving speech recognition accuracy by leveraging both labeled and unlabeled speech data.

- Sentiment analysis: Analyzing the sentiment (positive, negative, or neutral) of text by using a small set of labeled reviews and a larger set of unlabeled reviews.

- Fraud detection: Identifying fraudulent transactions by combining a small set of labeled transactions (confirmed fraudulent or legitimate) with a much larger pool of unlabeled transactions whose status is unknown.

- Medical image analysis: Analyzing medical images to diagnose diseases by using a small set of labeled images and a larger set of unlabeled images.

Common Semi-Supervised Learning algorithms include:

- Self-Training

- Co-Training

- Label Propagation
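
Self-Training, the first algorithm in the list above, can be sketched as follows: fit a model on the labeled data, pseudo-label the unlabeled points it is confident about, add them to the labeled set, and repeat. The nearest-centroid classifier, the `margin` confidence rule, and the data are all assumptions chosen to keep the example short.

```python
def squared_distance(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))


def centroid(points):
    return tuple(sum(dim) / len(points) for dim in zip(*points))


def self_train(labeled, unlabeled, margin=2.0, max_rounds=10):
    """labeled: list of (point, label) pairs; unlabeled: list of points.

    Assumes at least two classes are present in the labeled data.
    """
    labeled, pool = list(labeled), list(unlabeled)
    for _ in range(max_rounds):
        # Fit step: one centroid per class from the current labeled set.
        classes = sorted({label for _, label in labeled})
        centroids = {c: centroid([p for p, l in labeled if l == c]) for c in classes}
        confident, remaining = [], []
        for p in pool:
            ranked = sorted(classes, key=lambda c: squared_distance(p, centroids[c]))
            d_nearest = squared_distance(p, centroids[ranked[0]])
            d_runner_up = squared_distance(p, centroids[ranked[1]])
            # Confidence rule (an assumption): accept the pseudo-label only if
            # the runner-up centroid is at least `margin` times farther away.
            if d_runner_up >= margin * d_nearest:
                confident.append((p, ranked[0]))
            else:
                remaining.append(p)
        if not confident:
            break  # nothing new was labeled, so further rounds change nothing
        labeled.extend(confident)
        pool = remaining
    return labeled, pool


# Two labeled examples and five unlabeled points (all invented).
labeled = [((1.0, 1.0), "a"), ((9.0, 9.0), "b")]
unlabeled = [(1.5, 1.2), (2.0, 2.5), (8.0, 8.5), (9.5, 8.8), (5.0, 5.1)]
final_labeled, still_unlabeled = self_train(labeled, unlabeled)
print(final_labeled)
print(still_unlabeled)
```

Points that sit between the classes stay unlabeled instead of receiving a guess. This matters because a wrong pseudo-label would be fed back into later rounds as if it were ground truth.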

5. Transfer Learning: Applying Knowledge Across Domains

Transfer Learning takes knowledge acquired on one task or domain and applies it to a different but related task or domain. Rather than training a model from scratch for each new task, it initializes the model with pre-trained weights learned on a source task and then fine-tunes the model on the target task.

Consider a scenario where you’ve learned to ride a bicycle. This knowledge can then be “transferred” to learning to ride a motorcycle. While there are differences between the two (e.g., the presence of an engine, different balance requirements), the core skills of balancing, steering, and coordinating your movements are transferable.

Transfer Learning is particularly useful when:

- There is limited labeled data available for the target task.

- Training resources are constrained.

- The source and target tasks are related.

Transfer Learning has proven exceptionally effective in diverse applications, including:

- Image classification: Using models pre-trained on large image datasets such as ImageNet (e.g., ResNet) to enhance the accuracy of image classification tasks on new datasets.

- Natural language processing: Using pre-trained language models (e.g., BERT, GPT) to improve the performance of tasks such as text classification, machine translation, and question answering.

- Computer vision: Applying knowledge gained from object detection in one domain (e.g., autonomous driving) to object detection in another domain (e.g., medical image analysis).

Common Transfer Learning techniques include:

- Fine-tuning pre-trained models

- Feature extraction using pre-trained models
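
The feature-extraction technique can be sketched in miniature: the pre-trained layer is kept frozen and only a small new "head" is trained on the target task. Everything here is a stand-in. `PRETRAINED_WEIGHTS` is an invented placeholder for weights that would normally be loaded from a real pre-trained model, and the target task and data are made up.

```python
import math

# Placeholder for weights learned on a source task (invented numbers).
# In a real setting these would be loaded from a pre-trained model.
PRETRAINED_WEIGHTS = [[1.0, -1.0], [-1.0, 1.0]]


def extract_features(x):
    """Frozen feature extractor: a fixed linear layer followed by ReLU."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x)))
            for row in PRETRAINED_WEIGHTS]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_head(examples, epochs=200, learning_rate=0.5):
    """Train only a logistic-regression head on top of the frozen features."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, label in examples:
            features = extract_features(x)  # the extractor is never updated
            prediction = sigmoid(sum(w * f for w, f in zip(weights, features)) + bias)
            error = prediction - label
            # Gradient step on the head's parameters only.
            weights = [w - learning_rate * error * f
                       for w, f in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


def predict(x, weights, bias):
    features = extract_features(x)
    return sigmoid(sum(w * f for w, f in zip(weights, features)) + bias) >= 0.5


# Small labeled dataset for the target task (invented):
# label 1 if the first coordinate exceeds the second, otherwise 0.
target_examples = [((3.0, 1.0), 1), ((2.0, 0.5), 1), ((1.0, 3.0), 0), ((0.5, 2.0), 0)]
head_weights, head_bias = train_head(target_examples)
print(predict((4.0, 1.0), head_weights, head_bias))
print(predict((1.0, 4.0), head_weights, head_bias))
```

Fine-tuning differs in one respect: the pre-trained weights would also be updated, usually with a small learning rate, instead of being held fixed.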

Conclusion: Choosing the Right Learning Paradigm for Success

Machine Learning encompasses a varied set of learning techniques, each suited to particular challenges. Supervised Learning, Unsupervised Learning, Reinforcement Learning, Semi-Supervised Learning, and Transfer Learning are fundamental paradigms behind intelligent systems in many fields. Understanding the characteristics, applications, and trade-offs of each one helps practitioners choose the approach that best fits the data they have and the problem they need to solve. As the field evolves, a solid grasp of these paradigms will remain essential for building effective ML systems.