In today’s data-driven environment, machine learning (ML) has emerged as a transformative tool, empowering businesses and individuals to glean actionable intelligence, streamline workflows, and make informed decisions. From personalized recommendations on your favorite streaming platform to fraud detection systems safeguarding your financial transactions, ML is quietly revolutionizing our lives. While the field may seem complex, building your own ML model is more accessible than ever. This comprehensive guide will walk you through the essential steps, equipping you with the knowledge and tools to embark on your ML journey.

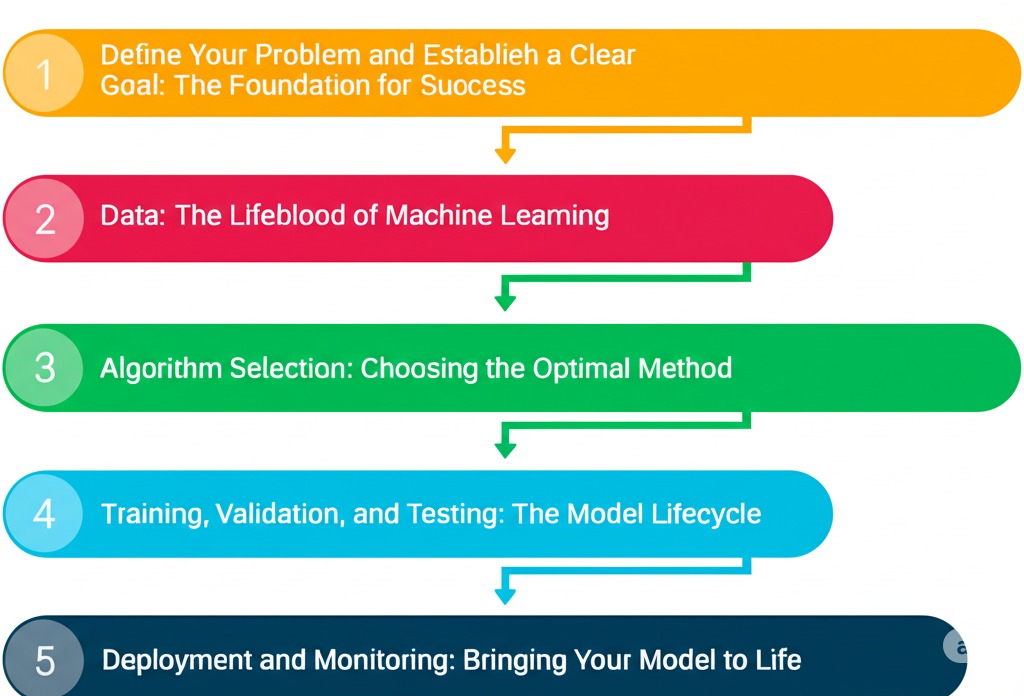

Below diagram explain the steps involved in building the Machine Learning model:

1. Define Your Problem and Establish a Clear Goal: The Foundation for Success

Before diving into the technical intricacies, it’s crucial to clearly articulate the problem you’re trying to solve. A well-defined problem serves as your compass, guiding your decisions throughout the entire ML model building process. Ask yourself:

- What specific question are we trying to address?

- What predictions are we trying to achieve?

- What practical insights do we hope to uncover?

Example: Instead of broadly aiming to “enhance user retention strategies,” define a more specific problem: “We want to predict customer churn for our subscription service. By identifying customers at high risk of canceling, we can proactively offer personalized incentives to encourage them to stay.”

Once you have a clear problem statement, establish a measurable goal that will allow you to evaluate your model’s success. Consider:

- What target accuracy rate is required to make the model useful?

- What level of gain relative to the current method is needed to warrant using this model (if any)?

- What quantifiable metrics (KPIs) will be tracked to to show model benefits?

A concrete goal provides a benchmark for assessing your model’s effectiveness and justifying its development.

2. Data: The Lifeblood of Machine Learning – Gathering, Cleaning, and Preparing

Data functions as the engine that powers machine learning algorithms. The quality, quantity, and relevance of your data directly impact the performance and reliability of your model. This stage involves:

- Data Acquisition: Identifying and gathering the relevant data sources needed to address your problem. This could include internal databases, spreadsheets, public APIs, web scraping techniques, third-party data vendors, or even sensor data.

- Data Cleaning (The Essential Prerequisite): Real-world data is rarely pristine. It’s often plagued by inconsistencies, errors, missing values, and noise. Cleaning your data is a critical, though often time-consuming, step to build trustworthy models. Key techniques include:

- Handling Missing Values: Impute missing data using statistical methods (mean, median, mode, or regression-based imputation) or advanced methodologies like K-Nearest Neighbors imputation. Consider the context of your data when choosing an imputation strategy to avoid skewing results. You might also simply remove rows or columns with too many missing values, but be aware of the potential for introducing bias into your data.

- Removing Duplicates: Eliminate duplicate entries to prevent skewing the model’s learning process.

- Error Correction: Identify and correct inaccuracies, inconsistencies, and outliers in your data. This could involve manual inspection, domain expertise, or automated data validation rules.

- Exploratory Data Analysis (EDA): Gaining Data Wisdom: EDA involves using statistical and visual techniques to understand the underlying structure of your data. This includes:

- Descriptive Statistics: Calculating summary statistics (mean, median, standard deviation, percentiles) to understand the distribution of your features.

- Data Visualization: Creating plots and charts (histograms, scatter plots, box plots) to identify patterns, relationships, and outliers. Libraries like Matplotlib and Seaborn in Python are invaluable tools for EDA.

- Feature Engineering: Refining Data Attributes: Feature engineering is the art of transforming raw data into meaningful features that your ML model can effectively learn from. This often requires domain expertise and a deep understanding of your data.

- Scaling and Normalization: Standardize the range of your features to prevent features with larger values from dominating the model, especially in algorithms that rely on distance calculations. Techniques include min-max scaling, standardization (Z-score normalization), and robust scaling.

- Encoding Categorical Variables: Most machine learning models are optimized for numerical inputs, Convert categorical variables (e.g., “city,” “product category”) into numerical representations using techniques like one-hot encoding, label encoding, or ordinal encoding.

- Creating New Features: Combine existing features or transform them to create new features that capture underlying relationships or patterns. For example, you might create an “interaction term” by multiplying two features together.

- Handling Text Data: If your data includes text, use techniques like tokenization, stemming, lemmatization, and TF-IDF (Term Frequency-Inverse Document Frequency) to convert it into numerical representations.

3. Algorithm Selection: Choosing the Optimal Method

Selecting the appropriate machine learning algorithm is critical for achieving your desired outcome. The choice depends on the type of problem you’re solving (regression, classification, clustering, etc.) and the characteristics of your data (size, complexity, data types).

- Supervised Learning: You have labeled data, meaning you have historical context to train on.

- Regression: Predicting a continuous numerical value (e.g., predicting house prices, stock values, or customer lifetime value). Common algorithms include:

- Linear Regression: A simple and interpretable algorithm for modeling linear relationships.

- Polynomial Regression: Captures non-linear relationships by fitting a polynomial equation to the data.

- Support Vector Regression (SVR): Effective for handling complex relationships and high-dimensional data.

- Decision Tree Regression: Creates a tree-like structure to predict values based on decision rules.

- Random Forest Regression: An ensemble method that combines multiple decision trees to improve accuracy and reduce overfitting.

- Classification: Predicting a categorical label (e.g., classifying emails as spam or not spam, identifying fraudulent transactions, or predicting customer churn). Popular algorithms include:

- Logistic Regression: A linear model for binary classification problems.

- Support Vector Machines (SVM): Effective for handling high-dimensional data and non-linear decision boundaries.

- Decision Trees: Interpretable and versatile algorithms for classification and regression.

- Random Forests: An ensemble method that combines multiple decision trees to improve accuracy and robustness.

- Naive Bayes: A probabilistic classifier based on Bayes’ theorem, often used for text classification.

- K-Nearest Neighbors (KNN): Classifies data points based on the majority class of their nearest neighbors.

- Regression: Predicting a continuous numerical value (e.g., predicting house prices, stock values, or customer lifetime value). Common algorithms include:

- Unsupervised Learning: You have unlabeled data, meaning you want to discover hidden patterns or structures with less historical context.

- Clustering: Grouping similar data points together based on their characteristics (e.g., customer segmentation, anomaly detection). Common algorithms include:

- K-Means Clustering: Partitions data into K clusters, where each data point belongs to the cluster with the nearest mean.

- Hierarchical Clustering: Creates a hierarchy of clusters, allowing you to explore different levels of granularity.

- DBSCAN (Density-Based Spatial Clustering of Applications with Noise): Identifies clusters based on density and can identify outliers.

- Dimensionality Reduction: Reducing the number of features while preserving important information (e.g., principal component analysis – PCA, t-distributed stochastic neighbor embedding – t-SNE). These are often used for visualization or to reduce the computational cost of training models.

- Clustering: Grouping similar data points together based on their characteristics (e.g., customer segmentation, anomaly detection). Common algorithms include:

4. Training, Validation, and Testing: The Model Lifecycle

This is where you actually build and refine your machine learning model.

- Data Splitting: Divide your data into three mutually exclusive sets:

- Training Set: Used to train the machine learning model. The algorithm learns patterns and relationships from this data.

- Validation Set: Used to fine-tune the model’s hyperparameters. Hyperparameters are settings that control the learning process itself (e.g., the learning rate in a neural network or the number of trees in a random forest). The validation set helps you avoid overfitting, which is when the model learns the training data too well and performs poorly on unseen data.

- Test Set: Used to evaluate the final, trained model’s performance on completely unseen data. This provides an unbiased estimate of how well the model will generalize to new data in the real world. It is crucial to only use it ONCE, at the very end.

- Model Training: Feed the training data to your chosen algorithm. The algorithm will adjust its internal parameters to minimize the error between its predictions and the actual target values.

- Hyperparameter Tuning: Most machine learning algorithms have hyperparameters that significantly influence their performance. Tuning these hyperparameters involves experimenting with different values using the validation set. Common techniques include:

- Grid Search: Systematically exploring all possible combinations of hyperparameter values.

- Randomized Search: Randomly sampling hyperparameter values from a specified distribution to find optimal hyperparameters.

- Bayesian Optimization: A more sophisticated approach that uses a probabilistic model to guide the search for optimal hyperparameters.

- Model Evaluation: Evaluate your model’s performance on the test set using appropriate metrics. The choice of metrics depends on the type of problem you’re solving:

- Regression: Mean Squared Error (MSE), Root Mean Squared Error (RMSE), R-squared (coefficient of determination), Mean Absolute Error (MAE).

- Classification: Accuracy, Precision, Recall, F1-score, Area Under the Receiver Operating Characteristic Curve (AUC-ROC), Confusion Matrix.

5. Deployment and Monitoring: Bringing Your Model to Life

Once you’re satisfied with your model’s performance, it’s time to deploy it into a production environment where it can be used to make real-world predictions and even real-time decision-making.

- Deployment: Integrate your model into your application, website, or system. This may involve:

- Creating an API endpoint that accepts input data and returns predictions.

- Embedding the model directly into your application code.

- Using a cloud-based machine learning platform to host and serve your model.

- Consider using containerization technologies like Docker and orchestration tools like Kubernetes to simplify deployment and scaling.

- Monitoring: Continuous monitoring is essential to ensure that your model maintains its performance over time. Track key metrics, such as:

- Prediction Accuracy: Monitor the model’s accuracy on new data.

- Latency: Measure the time it takes to generate predictions.

- Data Drift: Detect changes in the distribution of the input data, which can degrade model performance.

- Throughput: Measure the number of predictions the model can handle per unit of time.

- Security Audits: Monitoring of security protocols and best-practices.

If the model’s performance degrades significantly, you may need to retrain it with new data or adjust its hyperparameters. Data drift detection and automated retraining pipelines are key to maintain a successful model in production.

import pandas as pd

from sklearn.model_selection import train_test_split

from sklearn.linear_model import LogisticRegression

from sklearn.metrics import accuracy_score, classification_report

import pickle # For model serialization

# 1. Load your data (replace with your own data source)

data = pd.read_csv('your_data.csv') # A dataset containing features relevant to a classification task. Replace 'your_data.csv' with your own data source!

# 2. Prepare your data

X = data[['feature1', 'feature2', 'feature3']] # Features. Be sure these are relevant!

y = data['target_variable'] # Target Variable

# 3. Split your data

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.25, random_state=42)

# 4. Create and train your model

model = LogisticRegression()

model.fit(X_train, y_train)

# 5. Make predictions and evaluate

y_pred = model.predict(X_test)

accuracy = accuracy_score(y_test, y_pred)

print(f"Accuracy: {accuracy}")

print(classification_report(y_test, y_pred))

# 6. (Deployment and monitoring would involve:

# - Model Serialization: Saving the trained model to a file (e.g., using pickle or joblib) so it can be loaded later without retraining.

# - REST API Design: Creating a RESTful API endpoint using a framework like Flask or FastAPI to receive prediction requests and return model predictions in a standardized format (e.g., JSON).

# - Infrastructure: Deploying your API to a server (e.g., AWS EC2, Heroku, Google Cloud Run) and setting up monitoring to track its performance and identify issues.

# - Monitoring: Use tools like Prometheus and Grafana to check your performance and retrain the model if needed)Key Takeaways

Building an ML model is an iterative and experimental process.

The quality and preparation of your data are paramount.

Choose the algorithm that is best suited to your particular problem and data.

Consistently assess your model’s effectiveness, refining as needed.

Continuous monitoring is essential to ensure that your model continues to perform well over time.

Building a machine learning model is a rewarding journey that empowers you to address tangible challenges and unlock the power of data. Embrace the learning process, experiment with different techniques, and don’t be afraid to make mistakes. With persistence and the right resources, you can become a skilled machine learning practitioner and create innovative solutions that have a significant impact. Now, go build something amazing!

For more AI blogs visit my medium blog page: Anil Tiwari – Medium

Follow me on LinkedIn: https://www.linkedin.com/in/aniltiwari/