A Comprehensive History of Artificial Intelligence

Artificial Intelligence (AI), the pursuit of machines exhibiting human-like cognitive capabilities, is a field marked by bold ambitions and a cycle of rapid progress interspersed with periods of difficulty. The evolution from abstract theories to concrete applications has been protracted and captivating, shaped by brilliant minds, technological advances, and evolving societal expectations.

The Genesis of AI: Theoretical Underpinnings and Early Visions

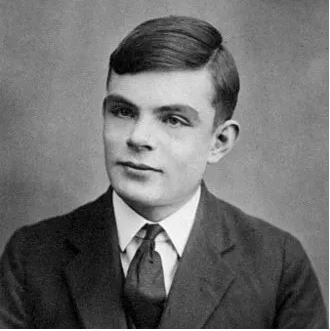

The groundwork for AI was laid in the mid-20th century with the rise of theoretical computer science and inquiries into the fundamental nature of computation. Alan Turing, a British mathematician of extraordinary intellect, stands as a seminal figure in this formative era.

Turing’s most significant contribution was the concept of the Turing machine, a theoretical model of a computational device capable of executing any calculation that can be defined algorithmically. This formalized the concepts of algorithm and computation and served as a blueprint for general-purpose computers. Beyond his theoretical contributions, Turing was a vital participant in wartime codebreaking at Bletchley Park. He played a leading role in the effort to decipher German Enigma messages, including the design of the electromechanical “Bombe,” a device that dramatically accelerated the decryption process. The Bombe showed that a machine could systematically search a large space of possibilities, an idea that recurs throughout later AI research.
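To make the idea of a Turing machine concrete, here is a minimal sketch of a single-tape simulator in Python. The function name `run_turing_machine`, the state names, and the example transition table `INCREMENT` (which adds one to a binary number) are illustrative assumptions, not anything taken from Turing's own formulation.

```python
from collections import defaultdict

def run_turing_machine(transitions, tape, start, accept, blank="_", max_steps=10_000):
    """Simulate a single-tape Turing machine (illustrative sketch).

    transitions maps (state, symbol) -> (new_state, symbol_to_write, move),
    where move is -1 (left) or +1 (right). The head starts on cell 0.
    """
    # The tape is unbounded in both directions; unseen cells read as blank.
    cells = defaultdict(lambda: blank, enumerate(tape))
    state, head, steps = start, 0, 0
    while state != accept:
        if steps >= max_steps:
            raise RuntimeError("step limit reached without halting")
        key = (state, cells[head])
        if key not in transitions:
            raise RuntimeError(f"no transition defined for {key}")
        state, cells[head], move = transitions[key]
        head += move
        steps += 1
    used = [i for i, symbol in cells.items() if symbol != blank]
    if not used:
        return ""
    return "".join(cells[i] for i in range(min(used), max(used) + 1))

# Hypothetical example machine: increment a binary number by one.
INCREMENT = {
    ("scan", "0"): ("scan", "0", +1),    # walk right to the end of the input
    ("scan", "1"): ("scan", "1", +1),
    ("scan", "_"): ("carry", "_", -1),   # step back onto the last digit
    ("carry", "1"): ("carry", "0", -1),  # 1 + carry = 0, keep carrying
    ("carry", "0"): ("halt", "1", -1),   # 0 + carry = 1, done
    ("carry", "_"): ("halt", "1", -1),   # overflow: write a new leading 1
}

print(run_turing_machine(INCREMENT, "1011", "scan", "halt"))  # prints 1100
print(run_turing_machine(INCREMENT, "111", "scan", "halt"))   # prints 1000
```

The whole "program" is the transition table; the simulator itself never changes, which is the sense in which one general-purpose device can carry out any algorithmically defined calculation.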

Furthermore, Turing pondered the very essence of intelligence. His well-known “imitation game,” now called the Turing Test, suggested that a machine capable of convincingly mimicking human conversation to the point where a human evaluator cannot discern it from a real person could be considered “intelligent.” This test, still debated today, provided a tangible target for AI development and ignited discussions about consciousness and machine intelligence.

1956: The Formal Inception of AI: The Dartmouth Workshop

The year 1956 marked a turning point in the history of AI with the Dartmouth Workshop. Organized by the computer scientist John McCarthy, alongside Marvin Minsky, Nathaniel Rochester, and Claude Shannon, this gathering is widely recognized as the event that formally established AI as a distinct field of research.

McCarthy, a central figure in the origin of AI, is credited with coining the term “artificial intelligence” in the proposal for the workshop. The Dartmouth Workshop united specialists from a range of fields – mathematics, psychology, computing, and linguistics – to explore the prospects of creating machines that could reason and solve problems in a manner analogous to human beings. The workshop’s intent was ambitious: to investigate methods of building machines with capacities for human-style reasoning, abstract thought, problem-solving, and even self-improvement. Participants envisioned machines capable of learning, using language, and exhibiting creativity. The proposal rested on the conjecture that “every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it.” This daring assertion set the agenda for the decades of exploration and progress that followed.

Early Progress and Pioneering Systems: The Initial Promise

In the years after the Dartmouth Workshop, AI went through a phase of swift growth and high expectations, propelled by notable achievements and the emergence of ground-breaking systems.

- 1957: The Perceptron – A Bedrock for Neural Networks: Frank Rosenblatt introduced the perceptron, an early neural network loosely modeled on the structure and functioning of the human brain. The perceptron, consisting of linked binary neural units with adjustable weights, learned by adjusting those weights in response to training examples. Although limited compared with current neural networks, the perceptron demonstrated the potential of machine learning and laid a foundational building block for AI systems to come.

- 1963: AI Laboratories at MIT and Stanford – Hubs of Innovation: Dedicated research facilities became central to the field. At MIT, Project MAC was launched in 1963; its AI group, led by Marvin Minsky, later became the MIT Artificial Intelligence Laboratory. The lab became a center of intensive AI research, contributing to areas spanning cognition, computer vision, decision theory, machine learning, and robotics. In parallel, John McCarthy, who had moved from MIT to Stanford, founded the Stanford Artificial Intelligence Laboratory (SAIL), consolidating the role of the West Coast in AI research. Work at these laboratories ranged across operating systems, AI, and the theory of computation.

- 1965: ELIZA – An Exploration of Natural Language Processing: Joseph Weizenbaum at MIT developed ELIZA, a natural language processing (NLP) program designed to emulate a Rogerian psychotherapist. ELIZA conversed with users in typed English, responding to their statements in the manner of a therapist. Weizenbaum named it after Eliza Doolittle from Pygmalion, because the program could be “taught” to communicate increasingly effectively. To Weizenbaum’s surprise, some users attributed genuine comprehension to ELIZA and confided in it during long exchanges, even though the program worked by the simple mechanism of parsing and rephrasing what the user had typed. ELIZA spotlighted both the prospects and the challenges of building machines that could understand and respond to human language.

- 1966: Semantic Networks – Knowledge Representation: Ross Quillian, then a PhD student, advanced knowledge representation by showing that semantic networks could capture human knowledge as graphs that map the connections among words and concepts. This work was a step toward machines capable of not just processing information but also representing its meaning.

- 1972: WABOT-1 – The First Humanoid Robot: Waseda University in Tokyo presented WABOT-1, acknowledged as the first full-scale intelligent humanoid robot. WABOT-1 was designed to walk, grip objects, speak and understand Japanese, and use visual and auditory sensors to judge distances. This pioneering accomplishment demonstrated the integration of various AI capabilities in a single physical system.

- 1980: Expert Systems – Encoding Expertise: Edward Feigenbaum, frequently referred to as the “father of expert systems,” pioneered systems that make decisions in the manner of human experts by applying rules to a knowledge base. These expert systems were deployed across diverse domains, from medical diagnosis to financial analysis, showcasing the practical application of AI to specific problem areas.

- 1980: The Neocognitron – A Preliminary Deep Learning Model: Kunihiko Fukushima unveiled the neocognitron, a hierarchical, multilayered neural network that recognizes visual patterns by geometrical similarity, irrespective of their location within a visual scene. It is widely regarded as a precursor of the convolutional neural networks used in computer vision today.

- 1986: Navlab – Pioneering Autonomous Driving: Engineers at Carnegie Mellon University designed Navlab, an early iteration of an autonomous vehicle outfitted with computing hardware, video peripherals, GPS, and a supercomputer. Navlab had the capacity to navigate roadways and avoid obstructions, thereby demonstrating the possibilities of AI within autonomous vehicular technology.
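The perceptron's learning rule, described in the 1957 entry above as adjusting weights in response to examples, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions rather than Rosenblatt's original formulation: the function names, the learning rate, the epoch limit, and the choice of the logical AND function as training data are all hypothetical.

```python
def predict(weights, bias, inputs):
    """Binary threshold unit: output 1 if the weighted sum exceeds zero."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0

def train_perceptron(samples, epochs=20, learning_rate=1.0):
    """Perceptron learning rule: nudge weights toward each misclassified example."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)  # -1, 0, or +1
            if error != 0:
                weights = [w + learning_rate * error * x
                           for w, x in zip(weights, inputs)]
                bias += learning_rate * error
                mistakes += 1
        if mistakes == 0:  # every example classified correctly; stop early
            break
    return weights, bias

# Assumed toy data: the logical AND of two binary inputs (linearly separable).
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(AND_SAMPLES)
for inputs, target in AND_SAMPLES:
    print(inputs, "->", predict(weights, bias, inputs), "(target", target, ")")
```

Because AND is linearly separable, the rule settles on a correct set of weights after a handful of passes. A single unit of this kind cannot represent functions that are not linearly separable, which is one of the limitations relative to modern multi-layer networks.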

The AI Winters: Confronting Reality and Overcoming Obstacles

Despite the early advancements and noteworthy breakthroughs, the field of AI experienced stretches of stagnation and disappointment, recognized as the “AI Winters.” These spans were defined by diminished funding, reduced research operations, and a widespread loss of confidence in the prospects of AI.

Several factors shaped the AI Winters. Early AI systems frequently ran into difficulties when scaled up to intricate real-world problems. The constraints of existing hardware and algorithms became apparent, and initial optimism faded as aspirations exceeded capabilities. While effective in certain fields, expert systems proved complex and expensive to build and maintain. Furthermore, AI research often lacked a sound theoretical foundation, which made it hard to translate theoretical advances into working applications.

The first AI Winter set in during the mid-1970s, fueled by criticism of the limited results of machine translation and by skeptical government assessments of AI research, such as the 1973 Lighthill report in the United Kingdom. A later, more severe AI Winter unfolded in the late 1980s and early 1990s, driven by the collapse of the Lisp machine market and the failure of expert systems, which were costly to build and maintain, to meet expectations. During these periods, investment in AI research waned, numerous AI laboratories closed, and the discipline faced a crisis of confidence.

The AI Re-emergence: A Fresh Phase of Progress

Despite the challenges of the AI Winters, AI research continued, sustained by an enduring belief in the potential of the field and reinforced by advances in computing resources, algorithms, and data availability.

The late 1990s marked the start of a renewal in AI, driven by several key factors:

- Enhanced Computing Capabilities: The rising power and falling cost of computers, especially the development of parallel processing architectures, enabled researchers to tackle more complex AI problems.

- Algorithmic Refinements: New algorithms, including support vector machines (SVMs) and Bayesian networks, provided more powerful and robust methods for machine learning.

- Data Proliferation: The boom in data, fueled by the expansion of the internet and increasing digitization, provided a rich trove of training material for machine learning algorithms.

This renaissance was notable for several pivotal milestones:

- 1997: Deep Blue Defeats Kasparov: IBM’s Deep Blue, a chess-playing computer, attained a historic victory by defeating world chess champion Garry Kasparov, exhibiting the competence of AI in strategic decision-making.

- 1997: RoboCup’s Inauguration: The RoboCup competition was launched with the long-term aim of developing autonomous humanoid robots able to play soccer matches against human teams, stimulating innovation in robotics, computer vision, and AI.

- 1998: Furby’s Arrival in Residences: The introduction of Furby, an interactive robotic toy, was an early and commercially successful effort to bring AI-style interaction into the home, showing the appeal of such technology for both amusement and companionship.

- 2002: Roomba Automates Housework: iRobot’s Roomba, an autonomous vacuum cleaner, changed household cleaning by navigating rooms and avoiding obstacles, highlighting the practical use of AI in everyday tasks.

- 2004: NASA Rovers Conduct Explorations on Mars: NASA’s Spirit and Opportunity rovers navigated the Martian landscape with a degree of autonomy, performing scientific investigations and showing the robustness of AI techniques in demanding conditions.

The Rise of Deep Learning and Modern AI: An Intelligence Revolution

Since the early 2010s, AI has undergone a revolution fueled by the ascent of deep learning, a subfield of machine learning that uses artificial neural networks with multiple layers to analyze data and extract complex patterns. Deep learning has achieved noteworthy results across a spectrum of uses, including computer vision, natural language processing, and voice recognition.
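A small sketch can show why stacking layers matters. The two-layer network below computes XOR, a function that no single threshold unit can represent. The weights here are hand-picked purely for illustration, and all names (`layer`, `xor_net`, the weight constants) are assumptions; real deep learning systems learn their weights from data, typically by gradient descent with differentiable activations rather than the hard threshold used here.

```python
def step(z):
    """Hard threshold activation: 1 if the input is positive, else 0."""
    return 1 if z > 0 else 0

def layer(inputs, weights, biases):
    """One fully connected layer: each row of weights feeds one output unit."""
    return [step(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]

# Hand-chosen (not learned) weights, for illustration only.
HIDDEN_WEIGHTS = [[1, 1], [1, 1]]
HIDDEN_BIASES = [-0.5, -1.5]   # first unit acts as OR, second as AND
OUTPUT_WEIGHTS = [[1, -1]]
OUTPUT_BIASES = [-0.5]         # fires when OR is on and AND is off

def xor_net(a, b):
    """Two-layer network: the hidden layer builds features, the output combines them."""
    hidden = layer([a, b], HIDDEN_WEIGHTS, HIDDEN_BIASES)
    return layer(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)[0]

for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", xor_net(a, b))
```

The hidden layer turns the raw inputs into intermediate features (here, OR and AND), and the output layer combines those features. Deep networks repeat this pattern across many layers, with the features learned rather than specified by hand.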

The principal drivers of the deep learning transformation include:

- Extensive Datasets: The accessibility of vast datasets has empowered deep learning algorithms to discern intricate patterns and attain unprecedented precision.

- Robust Computing Resources: Enhancements in processing capability, notably the development of GPUs (graphics processing units), have enabled the training of substantial and complex deep learning configurations.

- Algorithmic Breakthroughs: New deep learning architectures, such as convolutional neural networks (CNNs) and recurrent neural networks (RNNs), have proved exceptionally successful for particular tasks.

The impact of deep learning has been profound, resulting in considerable advances in various domains:

- Computer Vision: Deep learning has enabled machines to recognize objects, faces, and scenes with accuracy that approaches human performance on some tasks, enabling applications such as autonomous vehicles, facial recognition systems, and image search.

- Natural Language Processing: Deep learning has enabled machines to interpret, generate, and translate human languages with greater fluency and accuracy, giving rise to applications such as chatbots, machine translation tools, and sentiment analysis.

- Voice Recognition: Deep learning has enabled machines to transcribe speech with high precision, leading to applications such as voice-operated assistants, dictation applications, and automated transcription services.

Companies including Google, Amazon, Baidu, Facebook, and Microsoft have invested heavily in deep learning research and development, using these technologies to build new products and services. Research continues in computer vision, natural language processing, robotics, and pattern detection, pushing the frontiers of AI and opening up new possibilities.

The Course Ahead for AI: Possibilities and Hurdles

AI is progressing swiftly and holds the prospect of transforming many facets of society, from healthcare and education to transportation and industry. Even so, the growth and use of AI also raise ethical and social questions that need to be addressed.

The future course for AI will likely comprise:

- More Capable AI Systems: Continued advances in computing resources and algorithms will lead to more sophisticated AI systems capable of tackling progressively complex problems.

- Extensive AI Incorporation: AI will become increasingly embedded in daily life, from smartphones and vehicles to medical devices and financial systems.

- Ethical and Social Deliberations: As AI becomes increasingly capable and pervasive, it is vital to address its ethical and societal ramifications, including issues such as bias, fairness, transparency, and accountability.

The journey of AI, from theoretical concepts to practical applications, has been momentous, and the road ahead holds immense promise. By continuing to innovate, collaborate, and confront the challenges that arise, we have the potential to harness the power of AI to shape a favorable future for all.